Cipher

A memory framework for AI coding agents that retains context across conversations and sessions. Lets you preserve coding knowledge and share it with your team in real-time.

Cipher is a memory-powered agent framework that provides persistent memory across conversations and sessions using vector databases and embeddings. This enables context retention, reasoning pattern recognition, and shared workspace memory for team collaboration.

What it does

- Store persistent memory across IDE sessions

- Generate AI coding memories from your codebase

- Share coding context with team members

- Switch between IDEs without losing context

- Recognize coding patterns and reasoning

- Query conversation history with vector search

About Cipher

Cipher is a community-built MCP server published by campfirein that provides AI assistants with tools and capabilities via the Model Context Protocol. It gives agents persistent memory, using vector databases and embeddings for context retention. It is categorized under AI/ML and developer tools.

How to install

You can install Cipher in your AI client of choice. Use the install panel on this page to get one-click setup for Cursor, Claude Desktop, VS Code, and other MCP-compatible clients. This server runs locally on your machine via the stdio transport.

License

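Cipher's license is listed as NOASSERTION, the SPDX placeholder used when no standard license has been identified. Check the repository for the actual license terms.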

Byterover Cipher

Overview

Byterover Cipher is an open-source memory layer designed specifically for coding agents. It works through MCP with Cursor, Codex, Claude Code, Windsurf, Cline, Claude Desktop, Gemini CLI, AWS's Kiro, VS Code, Roo Code, Trae, Amp Code, and Warp, and with coding agents such as Kimi K2 (see the examples for more).

Built by the Byterover team.

Key Features:

- 🔌 MCP integration with any IDE you want.

- 🧠 Auto-generate AI coding memories that scale with your codebase.

- 🔄 Switch seamlessly between IDEs without losing memory and context.

- 🤝 Easily share coding memories across your dev team in real time.

- 🧬 Dual Memory Layer that captures System 1 (programming concepts, business logic, and past interactions) and System 2 (the model's reasoning steps when generating code).

- ⚙️ Install on your IDE with zero configuration needed.

Quick Start 🚀

NPM Package (Recommended for Most Users)

# Install globally

npm install -g @byterover/cipher

# Or install locally in your project

npm install @byterover/cipher

Docker

# Clone and setup

git clone https://github.com/campfirein/cipher.git

cd cipher

# Configure environment

cp .env.example .env

# Edit .env with your API keys

# Start with Docker

docker-compose up --build -d

# Test

curl http://localhost:3000/health
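The health check above can fail if it runs before the container has finished starting. A minimal sketch of a retry loop follows, assuming the API listens on http://localhost:3000 as in the curl command above. The name wait_until is a hypothetical helper and is not part of Cipher or Docker.

```shell
# Hypothetical helper: retry a command until it succeeds or attempts run out.
# Usage: wait_until <attempts> <delay-seconds> <command> [args...]
wait_until() {
  attempts="$1"
  delay="$2"
  shift 2
  i=1
  while :; do
    # Run the probe command quietly; success ends the wait.
    if "$@" >/dev/null 2>&1; then
      return 0
    fi
    # Give up once the attempt budget is spent.
    if [ "$i" -ge "$attempts" ]; then
      return 1
    fi
    i=$((i + 1))
    sleep "$delay"
  done
}

# Example (assumes the container from the steps above is starting up):
#   wait_until 30 2 curl -fsS http://localhost:3000/health && echo "Cipher is up"
```

Passing the probe as a command keeps the loop independent of curl, so the same helper can wrap any other readiness check.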

💡 Note: Docker builds automatically skip the UI build step to avoid ARM64 compatibility issues with lightningcss. The UI is not included in the Docker image by default.

To include the UI in the Docker build, use:

docker build --build-arg BUILD_UI=true .

From Source

pnpm i && pnpm run build && npm link

CLI Usage 💻

# Interactive mode

cipher

# One-shot command

cipher "Add this to memory as common causes of 'CORS error' in local dev with Vite + Express."

# API server mode

cipher --mode api

# MCP server mode

cipher --mode mcp

# Web UI mode

cipher --mode ui

⚠️ Note: When running MCP mode in a terminal or shell, export all environment variables, as Cipher won't read from the .env file.

💡 Tip: CLI mode automatically continues or creates the "default" session. Use /session new <session-name> to start a fresh session.
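Because MCP mode does not read the .env file, the variables have to be exported by the shell itself. One common way to do that is sketched below. It assumes the file contains only simple KEY=value lines without unquoted spaces in the values. The name load_env_file is a hypothetical helper, not a Cipher command.

```shell
# Hypothetical helper: export every KEY=value pair from a dotenv-style file
# into the current shell so that child processes inherit them.
load_env_file() {
  env_file="${1:-.env}"
  # A bare file name would be searched for on PATH by ".", so anchor it
  # to the current directory.
  case "$env_file" in
    */*) ;;
    *) env_file="./$env_file" ;;
  esac
  if [ ! -f "$env_file" ]; then
    echo "missing env file: $env_file" >&2
    return 1
  fi
  set -a          # auto-export every variable assigned from here on
  . "$env_file"   # the file is executed as shell code, so it must be trusted
  set +a          # stop auto-exporting
}

# Example (run from the directory that holds .env):
#   load_env_file .env && cipher --mode mcp
```

Since the file is sourced as shell code, trailing comments after a value are ignored, but a value containing spaces needs quotes.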

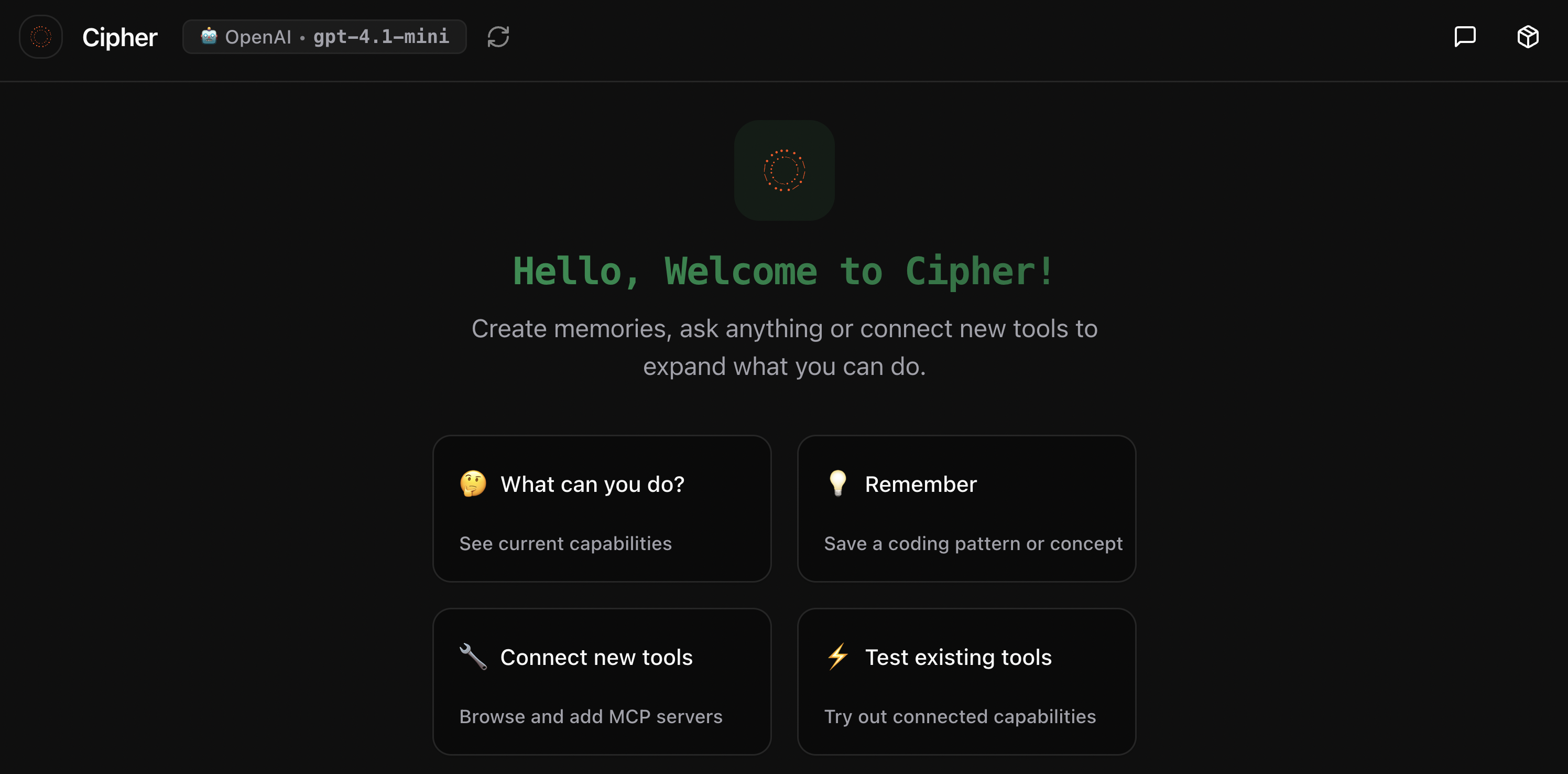

The Cipher Web UI provides an intuitive interface for interacting with memory-powered AI agents, featuring session management, tool integration, and real-time chat capabilities.

Configuration

Cipher supports multiple configuration options for different deployment scenarios. The main configuration file is located at memAgent/cipher.yml.

Basic Configuration ⚙️

# LLM Configuration
llm:
  provider: openai # openai, anthropic, openrouter, ollama, qwen
  model: gpt-4-turbo
  apiKey: $OPENAI_API_KEY

# System Prompt
systemPrompt: 'You are a helpful AI assistant with memory capabilities.'

# MCP Servers (optional)
mcpServers:
  filesystem:
    type: stdio
    command: npx
    args: ['-y', '@modelcontextprotocol/server-filesystem', '.']

📖 See Configuration Guide for complete details.

Environment Variables 🔐

Create a .env file in your project root with these essential variables:

# ====================

# API Keys (At least one required)

# ====================

OPENAI_API_KEY=sk-your-openai-api-key

ANTHROPIC_API_KEY=sk-ant-your-anthropic-key

GEMINI_API_KEY=your-gemini-api-key

QWEN_API_KEY=your-qwen-api-key

# ====================

# Vector Store (Optional - defaults to in-memory)

# ====================

VECTOR_STORE_TYPE=qdrant # qdrant, milvus, or in-memory

VECTOR_STORE_URL=https://your-cluster.qdrant.io

VECTOR_STORE_API_KEY=your-qdrant-api-key

# ====================

# Chat History (Optional - defaults to SQLite)

# ====================

CIPHER_PG_URL=postgresql://user:pass@localhost:5432/cipher_db

# ====================

# Workspace Memory (Optional)

# ====================

USE_WORKSPACE_MEMORY=true

WORKSPACE_VECTOR_STORE_COLLECTION=workspace_memory

# ====================

# AWS Bedrock (Optional)

# ====================

AWS_ACCESS_KEY_ID=your-aws-access-key

AWS_SECRET_ACCESS_KEY=your-aws-secret-key

AWS_DEFAULT_REGION=us-east-1

# ====================

# Advanced Options (Optional)

# ====================

# Logging and debugging

CIPHER_LOG_LEVEL=info # error, warn, info, debug, silly

REDACT_SECRETS=true

# Vector store configuration

VECTOR_STORE_DIMENSION=1536

VECTOR_STORE_DISTANCE=Cosine # Cosine, Euclidean, Dot, Manhattan

VECTOR_STORE_MAX_VECTORS=10000

# Memory search configuration

SEARCH_MEMORY_TYPE=knowledge # knowledge, reflection, both (default: knowledge)

DISABLE_REFLECTION_MEMORY=true # default: true

💡 Tip: Copy .env.example to .env and fill in your values: cp .env.example .env
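The template above marks at least one API key as required. A small pre-flight check along these lines can catch a missing key before Cipher starts. It is only a sketch based on the four key names listed in the template. The name has_api_key is a hypothetical helper, and setups that rely on other providers (for example Ollama or AWS Bedrock) may not need any of these keys.

```shell
# Hypothetical pre-flight check, not part of Cipher itself.
# Succeeds if at least one of the API keys from the template is non-empty.
has_api_key() {
  for name in OPENAI_API_KEY ANTHROPIC_API_KEY GEMINI_API_KEY QWEN_API_KEY; do
    # Look up the variable whose name is stored in $name.
    # eval is safe here because the names come from the fixed list above.
    eval "value=\${$name:-}"
    if [ -n "$value" ]; then
      return 0
    fi
  done
  echo "no LLM API key is set" >&2
  return 1
}

# Example:
#   has_api_key && cipher
```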

MCP Server Usage

Cipher can run as an MCP (Model Context Protocol) server, allowing integration with MCP-compatible clients like Codex, Claude Desktop, Cursor, Windsurf, and other AI coding assistants.

Installing via Smithery

To install Cipher for Claude Desktop automatically via Smithery:

npx -y @smithery/cli install @campfirein/cipher --client claude

Quick Setup

To use Cipher as an MCP server in your MCP client configuration:

{
  "mcpServers": {
    "cipher": {
      "type": "stdio",
      "command": "cipher",
      "args": ["--mode", "mcp"],
      "env": {
        "MCP_SERVER_MODE": "aggregator",
        "OPENAI_API_KEY": "your_openai_api_key",
        "ANTHROPIC_API_KEY": "your_anthropic_api_key"
      }
    }
  }
}
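The configuration above launches Cipher by the bare command name cipher, so the MCP client must be able to find that executable. Some clients may start with a different PATH than your interactive shell, which is an assumption worth checking for your own client. The sketch below looks the command up and prints where it resolves. The name resolve_command is a hypothetical helper.

```shell
# Hypothetical helper: print where a command resolves to, or fail with a message.
resolve_command() {
  command -v "$1" 2>/dev/null || {
    echo "not found on PATH: $1" >&2
    return 1
  }
}

# Example (after a global npm install):
#   resolve_command cipher
```

If the lookup succeeds but the client still cannot start the server, using the printed absolute path as the "command" value is one thing to try.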

📖 See MCP Integration Guide for complete MCP setup and advanced features.

👉 The built-in tools are summarized below. For full details, see docs/builtin-tools.md 📘.

Built-in Tools (overview)

- Memory
  - cipher_extract_and_operate_memory: Extracts knowledge and applies ADD/UPDATE/DELETE in one step
  - cipher_memory_search: Semantic search over stored knowledge
  - cipher_store_reasoning_memory: Store high-quality reasoning traces

- Reasoning (Reflection)
  - cipher_extract_reasoning_steps (internal): Extract structured reasoning steps
  - cipher_evaluate_reasoning (internal): Evaluate reasoning quality and suggest improvements
  - cipher_search_reasoning_patterns: Search reflection memory for patterns

- Workspace Memory (team)
  - cipher_workspace_search: Search team/project workspace memory
  - cipher_workspace_store: Background capture of team/project signals

- Knowledge Graph
  - cipher_add_node, cipher_update_node, cipher_delete_node, cipher_add_edge
  - cipher_search_graph, cipher_enhanced_search, cipher_get_neighbors
  - cipher_extract_entities, cipher_query_graph, cipher_relationship_manager

- System
  - cipher_bash: Execute bash commands (one-off or persistent)

Tutorial Video: Claude Code with Cipher MCP

Our tutorial video on YouTube shows how to integrate Cipher with Claude Code through MCP for enhanced coding assistance with persistent memory.

For detailed configuration instructions, see the CLI Coding Agents guide.

Documentation

📚 Complete Documentation

| Topic | Description |
|---|---|
| Configuration | Complete configuration guide including agent setup, embeddings, and vector stores |
| LLM Providers | Detailed setup for OpenAI, Anthropic, AWS, Azure, Qwen, Ollama, LM Studio |
| Embedding Configuration | Embedding providers, fallback logic, and troubleshooting |
| Vector Stores | Qdrant, Milvus, In-Memory vector database configurations |

| [Chat History](./ |

README truncated. View full README on GitHub.
