LlamaIndex

Provides access to multiple LLM providers through LlamaIndexTS for generating code, writing documentation, and answering questions directly from your MCP client.

What it does

- Generate code based on natural language descriptions

- Write code directly to specific files and line numbers

- Generate documentation for existing code

- Ask questions to various LLM providers

About LlamaIndex

LlamaIndex is a community-built MCP server published by sammcj that provides AI assistants with tools and capabilities via the Model Context Protocol. It integrates LlamaIndexTS to deliver question answering, code generation, and documentation writing across multiple LLM providers. It is categorized under AI/ML and developer tools.

How to install

You can install LlamaIndex in any MCP-compatible client, such as Cursor, Claude Desktop, or VS Code. The server runs locally on your machine via the stdio transport.

License

LlamaIndex is released under the MIT license. This is a permissive open-source license, meaning you can freely use, modify, and distribute the software.

MCP LLM

An MCP server that provides access to LLMs using the LlamaIndexTS library.

Features

This MCP server provides the following tools:

- generate_code: Generate code based on a description

- generate_code_to_file: Generate code and write it directly to a file at a specific line number

- generate_documentation: Generate documentation for code

- ask_question: Ask a question to the LLM

Installation

Installing via Smithery

To install LLM Server for Claude Desktop automatically via Smithery:

npx -y @smithery/cli install @sammcj/mcp-llm --client claude

Manual Install From Source

- Clone the repository

- Install dependencies:

npm install

- Build the project:

npm run build

- Update your MCP configuration
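The last step depends on your client. As a rough sketch, many MCP clients (Claude Desktop among them) read a JSON file containing an `mcpServers` map of commands and arguments. The snippet below builds such an entry. The server name `llm`, the entry point `build/index.js`, and the helper `buildMcpConfig` are assumptions for illustration only; check the repository for the real build output and any environment variables the server needs.

```javascript
const path = require("node:path");

// Build an MCP client config entry for a locally built server.
// ASSUMPTIONS: the entry name "llm" and the "build/index.js" entry point are
// hypothetical; adjust them to match the repository's actual build output.
function buildMcpConfig(repoDir, entryPoint = "build/index.js") {
  return {
    mcpServers: {
      llm: {
        command: "node",
        args: [path.resolve(repoDir, entryPoint)],
      },
    },
  };
}

// Print JSON that can be merged into the client's MCP configuration file.
console.log(JSON.stringify(buildMcpConfig("/path/to/mcp-llm"), null, 2));
```

Using an absolute path in `args` avoids depending on the directory the client happens to launch the server from.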

Using the Example Script

The repository includes an example script that demonstrates how to use the MCP server programmatically:

node examples/use-mcp-server.js

This script starts the MCP server and sends requests to it using curl commands.

Examples

Generate Code

{
  "description": "Create a function that calculates the factorial of a number",
  "language": "JavaScript"
}

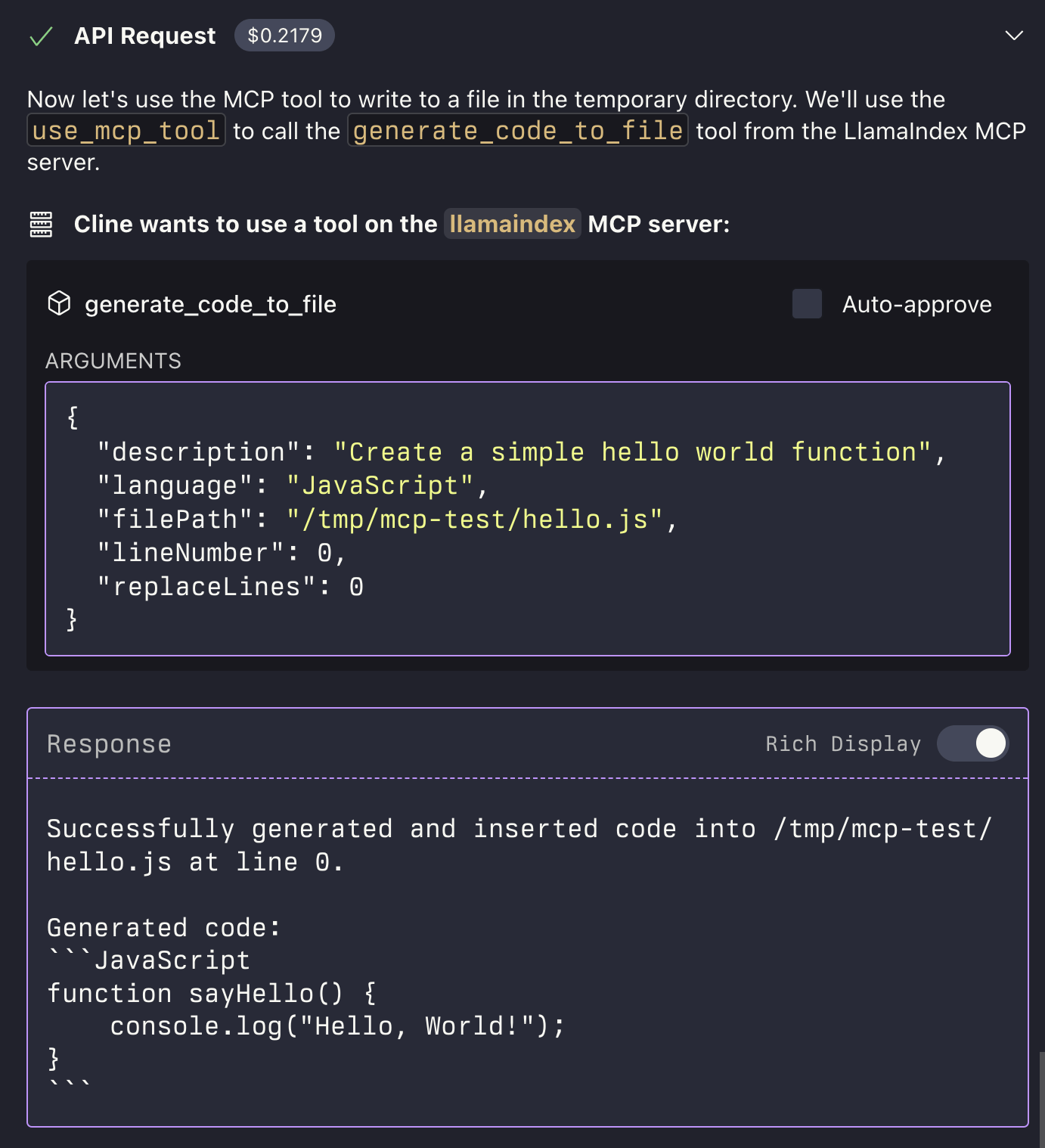
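The JSON above is the argument object for the generate_code tool. For readers calling the server without an MCP client, here is a minimal sketch of how those arguments could be wrapped in a Model Context Protocol `tools/call` request. The helper name `buildToolCall` is hypothetical, and a real session must complete the protocol's `initialize` handshake before sending tool calls.

```javascript
// Build a JSON-RPC 2.0 "tools/call" request in the shape MCP defines.
// buildToolCall is an illustrative helper, not part of this server.
function buildToolCall(id, toolName, args) {
  return {
    jsonrpc: "2.0",
    id,
    method: "tools/call",
    params: { name: toolName, arguments: args },
  };
}

const request = buildToolCall(1, "generate_code", {
  description: "Create a function that calculates the factorial of a number",
  language: "JavaScript",
});

// Over the stdio transport, each message is one line of JSON.
const line = JSON.stringify(request) + "\n";
process.stdout.write(line);
```

The same helper works for the other tools by changing the tool name and argument object.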

Generate Code to File

{
  "description": "Create a function that calculates the factorial of a number",
  "language": "JavaScript",
  "filePath": "/path/to/factorial.js",
  "lineNumber": 10,
  "replaceLines": 0
}

The generate_code_to_file tool supports both relative and absolute file paths. If a relative path is provided, it will be resolved relative to the current working directory of the MCP server.

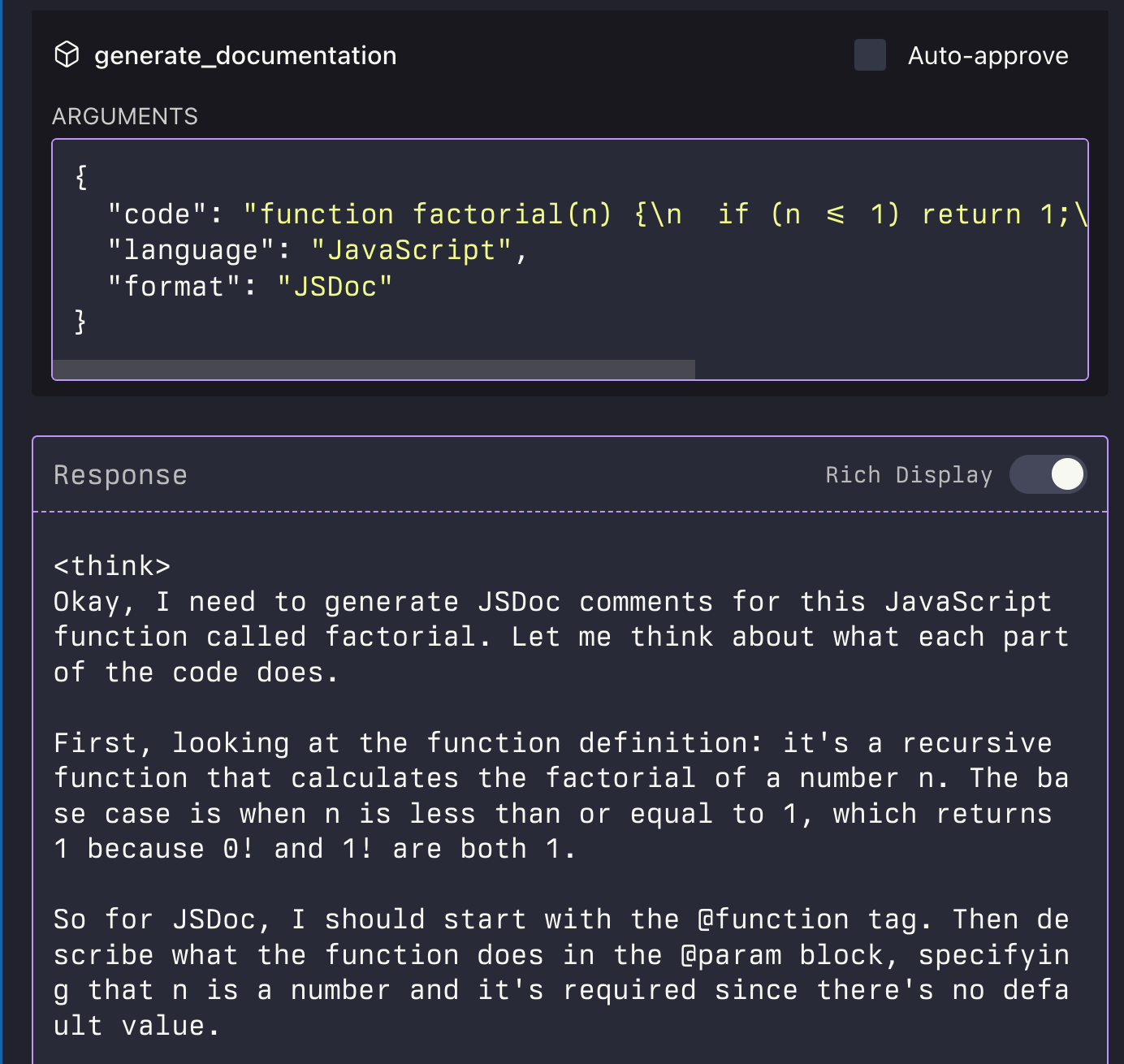
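To make the path and line parameters concrete, the sketch below shows one plausible reading of them. It is not the server's implementation: it assumes `lineNumber` is 1-based and that `replaceLines` is the number of existing lines to overwrite (with 0 meaning pure insertion). The helper names `resolveTarget` and `spliceLines` are hypothetical.

```javascript
const path = require("node:path");

// Resolve a possibly relative path against a base directory, which for the
// tool described above is the MCP server's current working directory.
function resolveTarget(filePath, cwd = process.cwd()) {
  return path.resolve(cwd, filePath);
}

// ASSUMPTION: lineNumber is 1-based and replaceLines counts the existing
// lines to overwrite (0 = insert only). The real server may differ.
function spliceLines(source, generated, lineNumber, replaceLines = 0) {
  const lines = source.split("\n");
  // Clamp the insertion point so out-of-range line numbers append or prepend.
  const index = Math.max(0, Math.min(lineNumber - 1, lines.length));
  lines.splice(index, replaceLines, ...generated.split("\n"));
  return lines.join("\n");
}

// Relative paths become absolute; absolute paths pass through unchanged.
console.log(resolveTarget("src/factorial.js"));
// Insert two generated lines before the second line of a three-line file.
console.log(spliceLines("a\nb\nc", "X\nY", 2, 0));
```

Because relative paths depend on where the server was started, passing an absolute `filePath` is the safer choice when the working directory is not known.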

Generate Documentation

{
  "code": "function factorial(n) {\n if (n <= 1) return 1;\n return n * factorial(n - 1);\n}",
  "language": "JavaScript",
  "format": "JSDoc"
}
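Because `code` is a JSON string, newlines in the source must be escaped as `\n`. A small sketch of building these arguments from multi-line source follows; `buildDocArgs` is a hypothetical helper, and `JSON.stringify` handles the escaping.

```javascript
// Multi-line source that we want documented.
const source = [
  "function factorial(n) {",
  "  if (n <= 1) return 1;",
  "  return n * factorial(n - 1);",
  "}",
].join("\n");

// Assemble the argument object using the field names from the example above.
// buildDocArgs is an illustrative helper, not part of this server.
function buildDocArgs(code, language, format) {
  return { code, language, format };
}

const docArgs = buildDocArgs(source, "JavaScript", "JSDoc");

// JSON.stringify escapes the embedded newlines as \n automatically.
console.log(JSON.stringify(docArgs, null, 2));
```

Serializing with `JSON.stringify` rather than hand-writing the string also takes care of quotes and backslashes inside the source.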

Ask Question

{
  "question": "What is the difference between var, let, and const in JavaScript?",
  "context": "I'm a beginner learning JavaScript and confused about variable declarations."
}
