Kubernetes Multi-Cluster Manager

Manages multiple Kubernetes clusters through a single interface. You can run kubectl commands, connect to managed clusters through service accounts, and access resources across clusters without manually switching contexts.

What it does

- List available Kubernetes clusters

- Connect to managed clusters with specified roles

- Execute kubectl commands across multiple clusters

- Apply YAML configurations to any cluster

- Retrieve resources from hub and managed clusters

About Kubernetes Multi-Cluster Manager

Kubernetes Multi-Cluster Manager is a community-built MCP server published by yanmxa. It gives AI assistants tools for kubectl-based management of multiple clusters via the Model Context Protocol. It is categorized under cloud infrastructure and developer tools, and it exposes 3 tools that AI clients can invoke during conversations and coding sessions.

How to install

You can install Kubernetes Multi-Cluster Manager in Cursor, Claude Desktop, VS Code, and other MCP-compatible clients. The server runs locally on your machine via the stdio transport.

License

Kubernetes Multi-Cluster Manager is released under the MIT license. This is a permissive open-source license, meaning you can freely use, modify, and distribute the software.

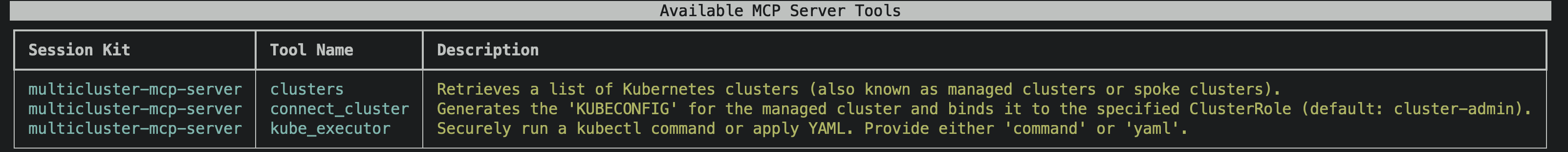

Tools (3)

Retrieves a list of Kubernetes clusters (also known as managed clusters or spoke clusters).

Generates the KUBECONFIG for the managed cluster and binds it to the specified ClusterRole (default: cluster-admin).

Securely run a kubectl command or apply YAML. Provide either 'command' or 'yaml'.
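The "either 'command' or 'yaml'" rule for the third tool can be sketched as a small client-side check. This is only an illustration: the helper name `build_kubectl_args` is hypothetical, the sketch assumes the two arguments are mutually exclusive, and the exact command format the server expects is not specified here.

```python
from typing import Optional


def build_kubectl_args(command: Optional[str] = None,
                       yaml: Optional[str] = None) -> dict:
    """Return tool arguments, requiring exactly one of `command` or `yaml`.

    Hypothetical helper: it only mirrors the argument names mentioned in the
    tool description and is not part of the server itself.
    """
    # True when both are missing or both are set; either case is rejected.
    if (command is None) == (yaml is None):
        raise ValueError("provide exactly one of 'command' or 'yaml'")
    if command is not None:
        return {"command": command}
    return {"yaml": yaml}
```

Calling `build_kubectl_args(command="get pods -A")` returns a dictionary with only the `command` key, while passing both arguments (or neither) raises `ValueError` before anything is sent to the server.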

Open Cluster Management MCP Server

The OCM MCP Server provides a gateway for Generative AI (GenAI) systems to interact with multiple Kubernetes clusters through the Model Context Protocol (MCP). It supports operations on Kubernetes resources, multi-cluster management, and interactive cluster observability.

🚀 Features

🛠️ MCP Tools - Kubernetes Cluster Awareness

- ✅ Retrieve resources from the hub cluster (current context)

- ✅ Retrieve resources from the managed clusters

- ✅ Connect to a managed cluster using a specified ClusterRole

- ✅ Access resources across multiple Kubernetes clusters (via Open Cluster Management)

- 🔄 Retrieve and analyze metrics, logs, and alerts from integrated clusters

- ❌ Interact with multi-cluster APIs, including Managed Clusters, Policies, Add-ons, and more
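To make "connect using a specified ClusterRole" concrete, here is a sketch of the kind of Kubernetes RBAC object that grants a ClusterRole to a service account. It is not taken from the server's source; the function name and the service account and namespace values are hypothetical, and how the server actually creates its binding is an assumption.

```python
import json


def cluster_role_binding(service_account: str, namespace: str,
                         cluster_role: str = "cluster-admin") -> dict:
    """Build a ClusterRoleBinding manifest for a ServiceAccount.

    Illustrative only: the naming scheme below is an assumption, not the
    server's behavior.
    """
    return {
        "apiVersion": "rbac.authorization.k8s.io/v1",
        "kind": "ClusterRoleBinding",
        "metadata": {"name": f"{service_account}-{cluster_role}"},
        # Who receives the permissions.
        "subjects": [{
            "kind": "ServiceAccount",
            "name": service_account,
            "namespace": namespace,
        }],
        # Which ClusterRole is granted.
        "roleRef": {
            "apiGroup": "rbac.authorization.k8s.io",
            "kind": "ClusterRole",
            "name": cluster_role,
        },
    }


if __name__ == "__main__":
    # kubectl accepts JSON manifests as well as YAML.
    print(json.dumps(cluster_role_binding("mcp-agent", "default", "view"), indent=2))
```

Because the default role is cluster-admin, it may be worth passing a narrower role when full access is not needed; the built-in `view` ClusterRole is one read-only option.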

📦 Prompt Templates for Open Cluster Management (Planning)

- Provide reusable prompt templates tailored for OCM tasks, streamlining agent interaction and automation

📚 MCP Resources for Open Cluster Management (Planning)

- Reference official OCM documentation and related resources to support development and integration

📌 How to Use

Configure the server using the following snippet:

{
  "mcpServers": {
    "multicluster-mcp-server": {
      "command": "npx",
      "args": [
        "-y",
        "multicluster-mcp-server@latest"
      ]
    }
  }
}

Note: Ensure kubectl is installed. By default, the tool uses the KUBECONFIG environment variable to access the cluster. In a multi-cluster setup, it treats the configured cluster as the hub cluster, accessing others through it.
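As a sketch of the note above, the script below checks that kubectl is on the PATH and generates a configuration that points the server at a specific hub kubeconfig. It assumes your MCP client accepts an `env` map per server entry, as several common clients do; the helper names and the kubeconfig path are hypothetical.

```python
import json
import shutil


def build_mcp_config(hub_kubeconfig: str) -> dict:
    """Return an MCP client config that sets KUBECONFIG for the server process."""
    return {
        "mcpServers": {
            "multicluster-mcp-server": {
                "command": "npx",
                "args": ["-y", "multicluster-mcp-server@latest"],
                # Assumption: the client passes this map to the server
                # process as environment variables.
                "env": {"KUBECONFIG": hub_kubeconfig},
            }
        }
    }


def kubectl_available() -> bool:
    """Report whether a kubectl executable can be found on the PATH."""
    return shutil.which("kubectl") is not None


if __name__ == "__main__":
    if not kubectl_available():
        print("warning: kubectl not found on PATH")
    # Placeholder path: replace with the kubeconfig of your hub cluster.
    print(json.dumps(build_mcp_config("/path/to/hub-kubeconfig"), indent=2))
```

Setting `KUBECONFIG` explicitly in the server entry avoids depending on whatever environment the client happens to launch the server with.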
