Scrapeless (Google Search)

OfficialConnects AI models to Google Search through the Scrapeless API, allowing programmatic search queries with customizable parameters like location and language.

Provides a bridge to the Scrapeless API for performing Google searches with customizable parameters including query text, country code, and language preferences.

What it does

- Perform Google searches with custom queries

- Filter results by country and language

- Extract search result titles and summaries

- Retrieve search result URLs

- Access real-time search data

Best for

About Scrapeless (Google Search)

Scrapeless (Google Search) is an official MCP server published by scrapeless-ai that provides AI assistants with tools and capabilities via the Model Context Protocol. Access the Scrapeless Google Search API for customizable queries by text, country, or language. Easily integrate with cu It is categorized under search web.

How to install

You can install Scrapeless (Google Search) in your AI client of choice. Use the install panel on this page to get one-click setup for Cursor, Claude Desktop, VS Code, and other MCP-compatible clients. This server runs locally on your machine via the stdio transport.

License

Scrapeless (Google Search) is released under the MIT license. This is a permissive open-source license, meaning you can freely use, modify, and distribute the software.

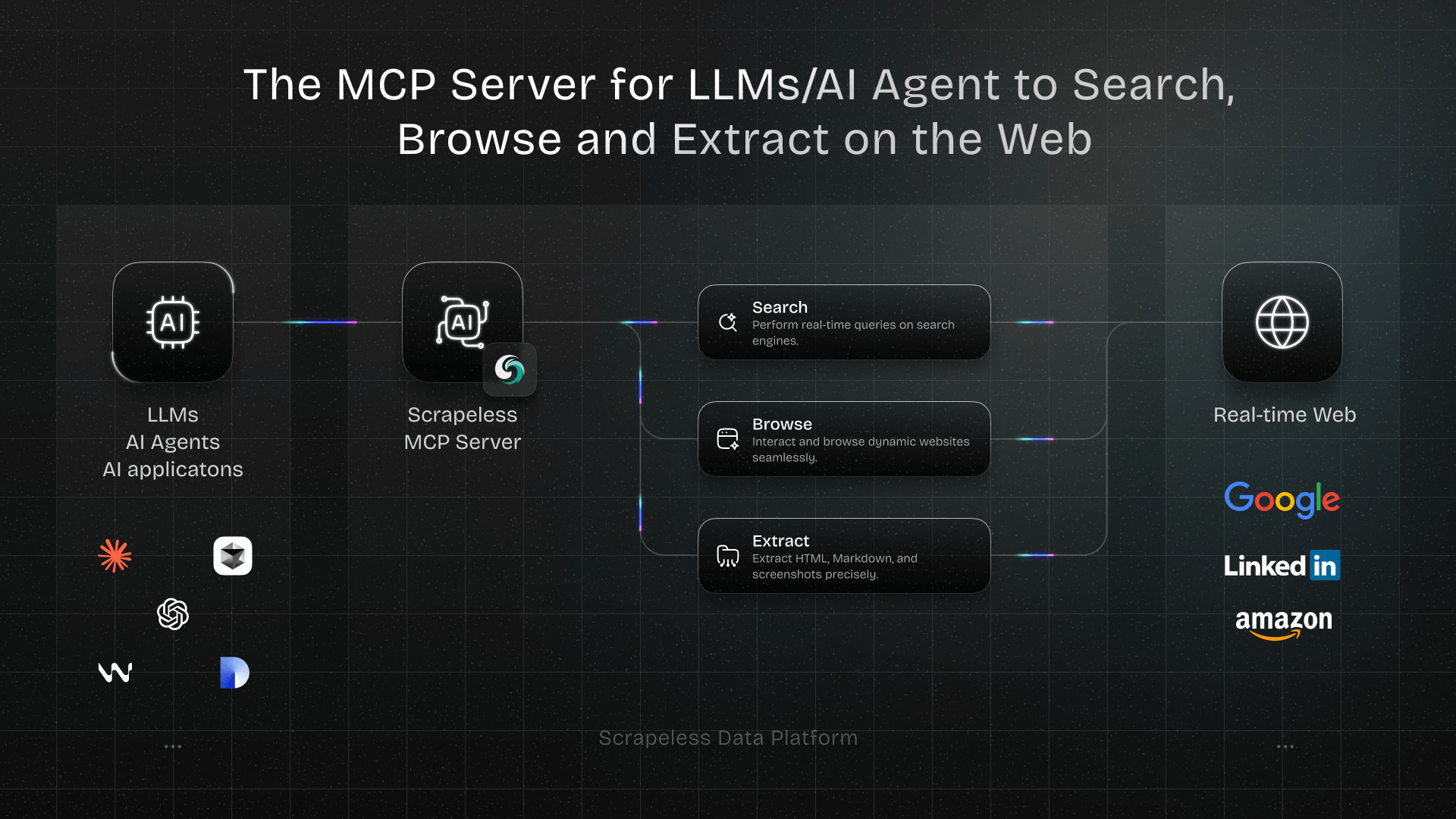

Scrapeless MCP Server

Welcome to the official Scrapeless Model Context Protocol (MCP) Server — a powerful integration layer that empowers LLMs, AI Agents, and AI applications to interact with the web in real time.

Built on the open MCP standard, Scrapeless MCP Server seamlessly connects models like ChatGPT, Claude, and tools like Cursor and Windsurf to a wide range of external capabilities, including:

- Google services integration (Search, Trends)

- Browser automation for page-level navigation and interaction

- Scrape dynamic, JS-heavy sites—export as HTML, Markdown, or screenshots

Whether you're building an AI research assistant, a coding copilot, or autonomous web agents, this server provides the dynamic context and real-world data your workflows need—without getting blocked.

Usage Examples

- Automated Web Interaction and Data Extraction with Claude

Using Scrapeless MCP Browser, Claude can perform complex tasks such as web navigation, clicking, scrolling, and scraping through conversational commands, with real-time preview of web interaction results via live sessions.

- Bypassing Cloudflare to Retrieve Target Page Content

Using the Scrapeless MCP Browser service, the Cloudflare page is automatically accessed, and after the process is completed, the page content is extracted and returned in Markdown format.

- Extracting Dynamically Rendered Page Content and Writing to File

Using the Scrapeless MCP Universal API, the JavaScript-rendered content of the target page above is scraped, exported in Markdown format, and finally written to a local file named text.md.

- Automated SERP Scraping

Using the Scrapeless MCP Server, query the keyword “web scraping” on Google Search, retrieve the first 10 search results (including title, link, and summary), and write the content to the file named serp.text.

Here are some additional examples of how to use these servers:

| Example |

|---|

| Search scrapeless by Google search. |

| Find the search interest for "AI" over the last year. |

| Use a browser to visit chatgpt.com, search for "What's the weather like today?", and summarize the results. |

| Scrape the HTML content of scrapeless.com page. |

| Scrape the Markdown content of scrapeless.com page. |

| Get screenshots of scrapeless.com. |

Setup Guide

- Get Scrapeless Key

- Log in to the Scrapeless Dashboard(Free trial available)

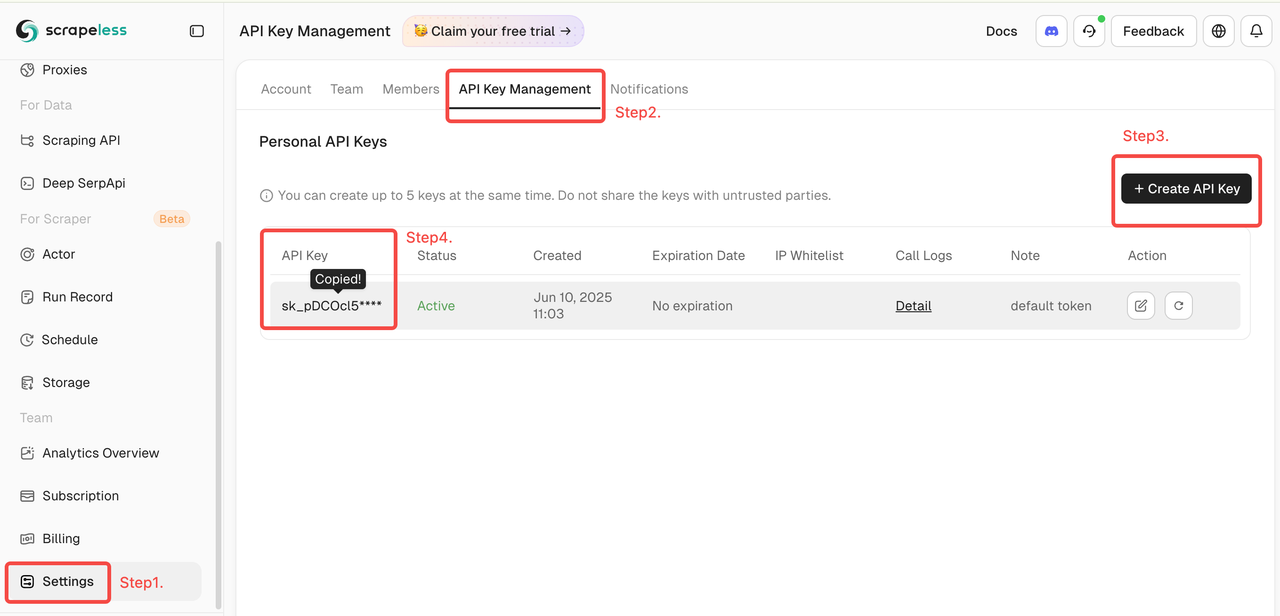

- Then click "Setting" on the left -> select "API Key Management" -> click "Create API Key". Finally, click the API Key you created to copy it.

- Configure Your MCP Client

Scrapeless MCP Server supports both Stdio and Streamable HTTP transport modes.

🖥️ Stdio (Local Execution)

{

"mcpServers": {

"Scrapeless MCP Server": {

"command": "npx",

"args": ["-y", "scrapeless-mcp-server"],

"env": {

"SCRAPELESS_KEY": "YOUR_SCRAPELESS_KEY"

}

}

}

}

🌐 Streamable HTTP (Hosted API Mode)

{

"mcpServers": {

"Scrapeless MCP Server": {

"type": "streamable-http",

"url": "https://api.scrapeless.com/mcp",

"headers": {

"x-api-token": "YOUR_SCRAPELESS_KEY"

},

"disabled": false,

"alwaysAllow": []

}

}

}

Advanced Options

Customize browser session behavior with optional parameters. These can be set via environment variables (for Stdio) or HTTP headers (for Streamable HTTP):

| Stdio (Env Var) | Streamable HTTP (HTTP Header) | Description |

|---|---|---|

| BROWSER_PROFILE_ID | x-browser-profile-id | Specifies a reusable browser profile ID for session continuity. |

| BROWSER_PROFILE_PERSIST | x-browser-profile-persist | Enables persistent storage for cookies, local storage, etc. |

| BROWSER_SESSION_TTL | x-browser-session-ttl | Defines the maximum session timeout in seconds. The session will automatically expire after this duration of inactivity. |

Integration with Claude Desktop

- Open Claude Desktop

- Navigate to:

Settings→Tools→MCP Servers - Click "Add MCP Server"

- Paste either the

StdioorStreamable HTTPconfig above - Save and enable the server

- Claude will now be able to issue web queries, extract content, and interact with pages using Scrapeless

Integration with Cursor IDE

- Open Cursor

- Press

Cmd + Shift + Pand search for:Configure MCP Servers - Add the Scrapeless MCP config using the format above

- Save the file and restart Cursor (if needed)

- Now you can ask Cursor things like:

"Search StackOverflow for a solution to this error""Scrape the HTML from this page"

- And it will use Scrapeless in the background.

Supported MCP Tools

| Name | Description |

|---|---|

| google_search | Universal information search engine. |

| google_trends | Get trending search data from Google Trends. |

| browser_create | Create or reuse a cloud browser session using Scrapeless. |

| browser_close | Closes the current session by disconnecting the cloud browser. |

| browser_goto | Navigate browser to a specified URL. |

| browser_go_back | Go back one step in browser history. |

| browser_go_forward | Go forward one step in browser history. |

| browser_click | Click a specific element on the page. |

| browser_type | Type text into a specified input field. |

| browser_press_key | Simulate a key press. |

| browser_wait_for | Wait for a specific page element to appear. |

| browser_wait | Pause execution for a fixed duration. |

| browser_screenshot | Capture a screenshot of the current page. |

| browser_get_html | Get the full HTML of the current page. |

| browser_get_text | Get all visible text from the current page. |

| browser_scroll | Scroll to the bottom of the page. |

| browser_scroll_to | Scroll a specific element into view. |

| scrape_html | Scrape a URL and return its full HTML content. |

| scrape_markdown | Scrape a URL and return its content as Markdown. |

| scrape_screenshot | Capture a high-quality screenshot of any webpage. |

Security Best Practices

When using Scrapeless MCP Server with LLMs (like ChatGPT, Claude, or Cursor), it's critical to handle all scraped or extracted web content with care. Web data is untrusted by default, and improper handling may expose your application to prompt injection or other security vulnerabilities.

✅ Recommended Practices

- Never pass raw scraped content directly into LLM prompts. Raw HTML, JavaScript, or user-generated text may contain hidden injection payloads.

- Sanitize and validate all extracted content. Strip or escape potentially harmful tags and scripts before using content in downstream logic or AI models.

- Prefer structured extraction over free-form text. Use tools like

scrape_html,scrape_markdown, or targetedbrowser_get_textwith known-safe selectors to extract only the content you trust. - Apply domain or selector whitelisting when scraping dynamically generated pages, to restrict data flow to known and trusted sources.

- Log and monitor all outbound requests made via browser or scraping tools, especially if you're handling sensitive data, tokens, or internal network access.

🚫 Avoid

- Injecting scraped HTML directly into prompts

- Letting users specify arbitrary URLs or CSS selectors without validation

- Storing unfiltered scraped content for future prompt usage

Community

Contact Us

For questions, suggestions, or collaboration inquiries, feel free to contact us via:

- Email: [email protected]

- Official Website: https://www.scrapeless.com

- Community Forum: https://discord.gg/Np4CAHxB9a

Alternatives

Related Skills

Browse all skillsOfficial Google SEO guide covering search optimization, best practices, Search Console, crawling, indexing, and improving website search visibility based on official Google documentation

Search the web using Google Custom Search Engine (PSE). Use this when you need live information, documentation, or to research topics and the built-in web_search is unavailable.

Search the web for information. Use when you need to look something up, find current information, or research a topic.

Generates B2B/B2C leads by scraping Google Maps, websites, Instagram, TikTok, Facebook, LinkedIn, YouTube, and Google Search. Use when user asks to find leads, prospects, businesses, build lead lists, enrich contacts, or scrape profiles for sales outreach.

Searches Google and extracts full page content from every result via trafilatura. Returns clean readable text, not just snippets. Use when the user needs web search, research, current events, news, factual lookups, product comparisons, technical documentation, or any question requiring up-to-date information from the internet.

Web scraping and search via Bright Data API. Requires BRIGHTDATA_API_KEY and BRIGHTDATA_UNLOCKER_ZONE. Use for scraping any webpage as markdown (bypassing bot detection/CAPTCHA) or searching Google with structured results.